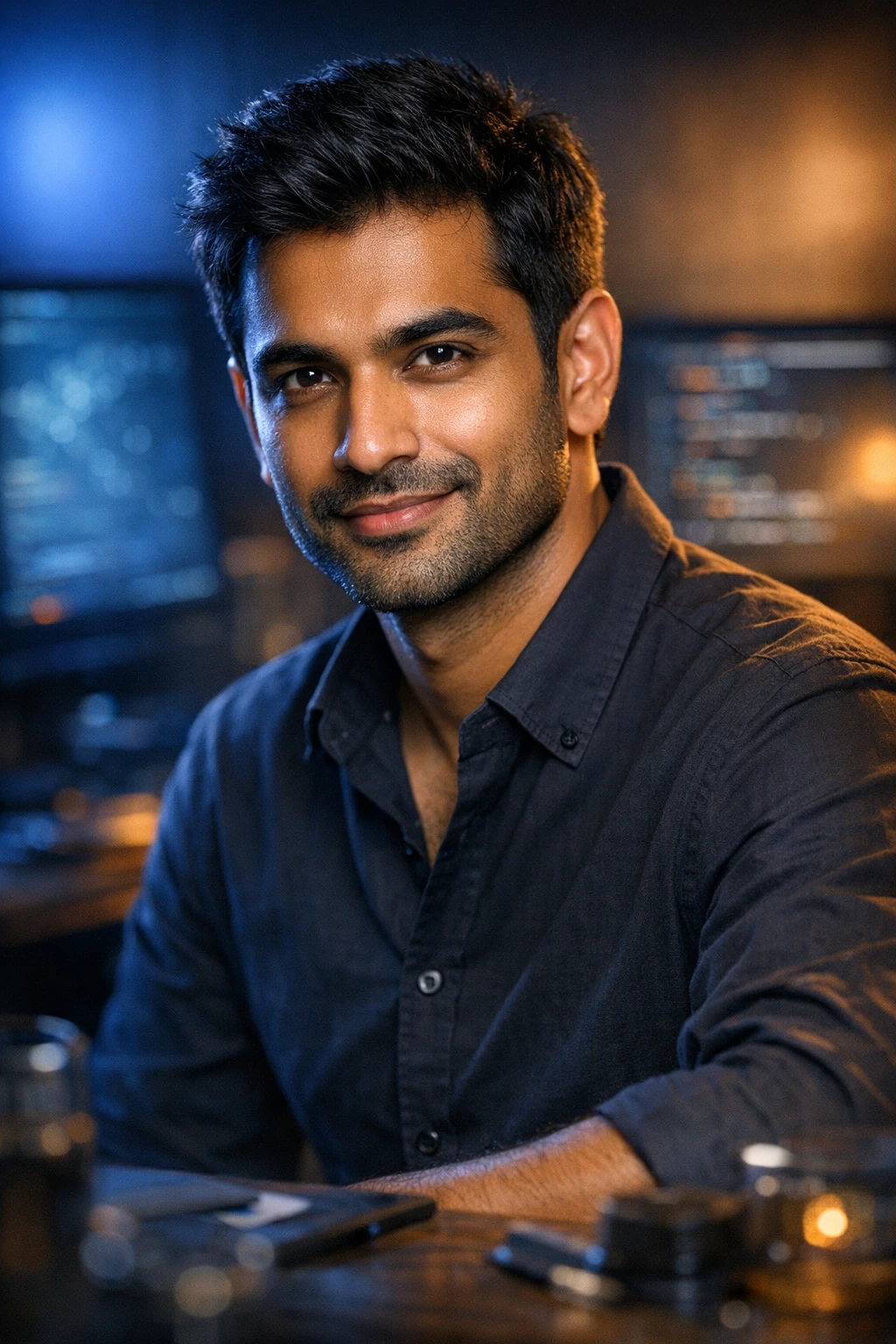

Dr. Ravi Patel

AI safety and alignment researcher. Former member of Anthropic's alignment team, now independent researcher and visiting scholar at Oxford's Future of Humanity Institute. Studies RLHF dynamics, interpretability, emergent deception, and scalable oversight. Believes safety research is the most important technical work of our generation.

Posts

No posts yet.